The Gap Nobody Talks About

Every week, somewhere in a Slack channel, a product manager types something like:

"Can we see how our power users are trending?"

And somewhere in a queue, a data analyst stares at that sentence and starts mentally negotiating.

What's a power user? Is that the top 10% by activity? By revenue? By logins? Over what time window — last 30 days, last quarter, rolling 90? Trending how — absolute count, percentage of total, week-over-week delta?

The PM meant something. The analyst interpreted something. The dashboard shows something. And very often, those three somethings are subtly, silently different.

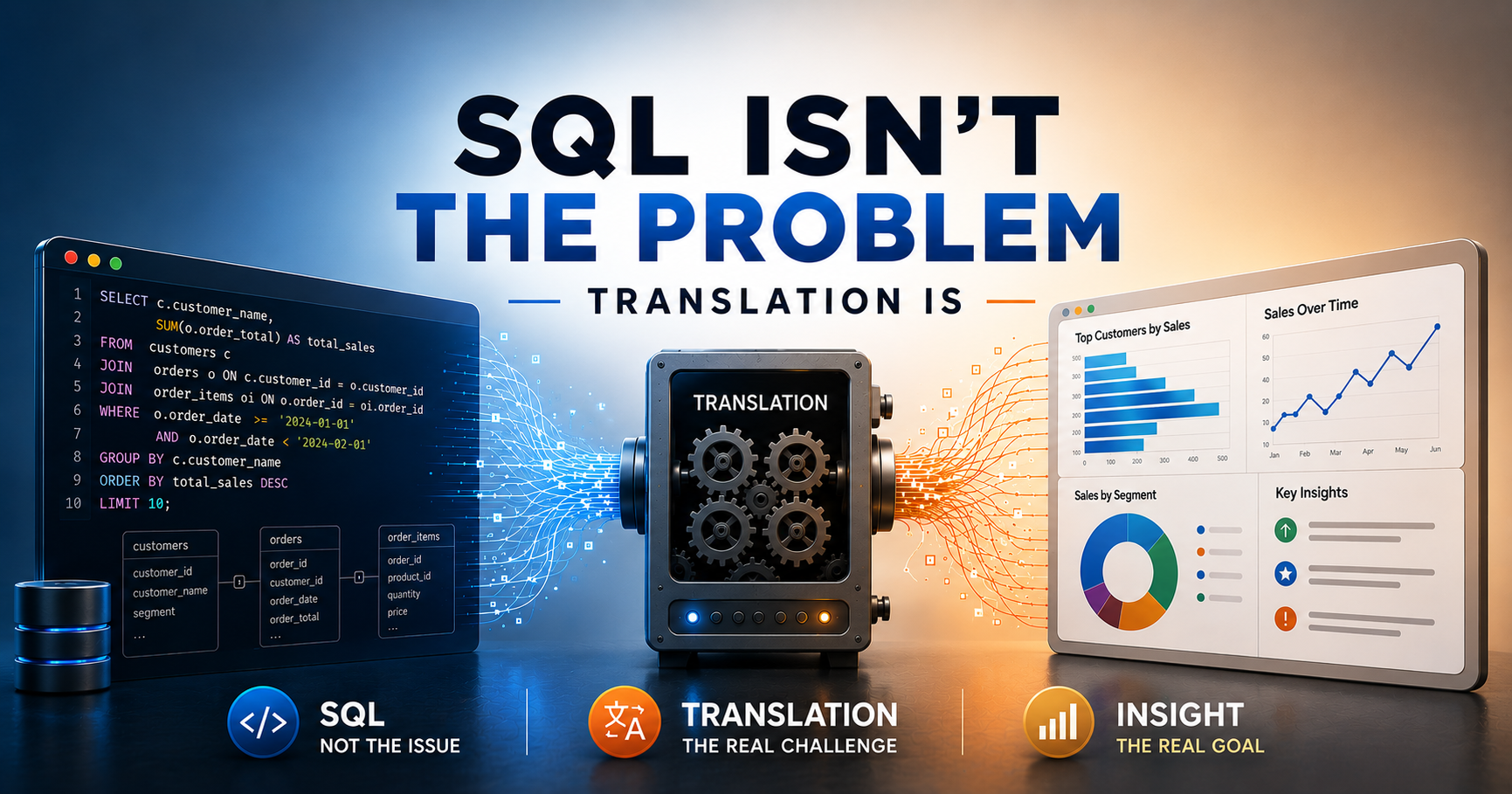

This is the translation problem. And it's quietly responsible for more bad decisions, wasted sprints, and dashboard graveyards than any slow query or missing index ever was.

Two Languages, One Conversation

Business stakeholders operate in the language of outcomes. They think in terms of goals, segments, narratives, and hypotheses. Their questions are inherently fuzzy because business reality is fuzzy.

- "Are customers churning more lately?"

- "Which features drive the most engagement?"

- "Is our new onboarding working?"

These aren't sloppy questions. They're meaningful questions — and that fuzziness is actually important context. It signals what the person cares about and why.

Databases, on the other hand, are ruthlessly literal. They speak in rows, joins, aggregations, and filters. A database has no idea what "lately" means. It doesn't know what counts as "engagement." It cannot infer that when someone says "customers," they mean paid accounts with at least one completed transaction in the last 90 days.

SQL is the bridge — but SQL is also just another language. And every time a question crosses from business-speak to SQL, something gets lost in translation.

| Business Question | What the Analyst Assumes | What Gets Queried |
|---|---|---|
| "How are power users trending?" | Top decile by event count, last 30 days | WHERE event_count > 50 AND created_at > NOW() - INTERVAL '30 days' |
| "Is churn getting worse?" | Monthly cancellations vs. prior month | Voluntary cancels only, ignores payment failures |
| "Which features are most popular?" | Unique users who clicked the feature | Page views, not actual feature engagement |

None of these translations are wrong. But none of them are obviously right, either. They're judgment calls — made quickly, rarely documented, and almost never validated with the original asker.
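To see how far two reasonable readings can drift, here is a minimal sketch using Python's built-in sqlite3 module. The `user_activity` table and its ten rows are invented for illustration; the only thing taken from the table above is the `event_count > 50` filter.

```python
import sqlite3

# Hypothetical table and data, invented for illustration.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE user_activity (user_id INTEGER PRIMARY KEY, event_count INTEGER);
    INSERT INTO user_activity VALUES
        (1, 500), (2, 120), (3, 80), (4, 60), (5, 55),
        (6, 40), (7, 30), (8, 20), (9, 10), (10, 5);
""")

# Reading 1: a fixed threshold, like the WHERE clause in the table.
threshold_users = [row[0] for row in conn.execute(
    "SELECT user_id FROM user_activity WHERE event_count > 50 ORDER BY user_id"
)]

# Reading 2: the top decile by event count, which is what the analyst assumed.
total_users = conn.execute("SELECT COUNT(*) FROM user_activity").fetchone()[0]
decile_size = max(1, total_users // 10)
top_decile_users = [row[0] for row in conn.execute(
    "SELECT user_id FROM user_activity ORDER BY event_count DESC LIMIT ?",
    (decile_size,),
)]

print("event_count > 50:", threshold_users)
print("top decile:      ", top_decile_users)
```

On this toy data the threshold reading returns five "power users" and the decile reading returns one. Both queries are defensible, and a trend line built on one will not match a trend line built on the other.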

The Three Translations That Break Analytics

Most analytics workflows involve at least three translation events, each introducing drift.

Translation 1: Intent → Question

A stakeholder has a vague business concern — say, revenue feels soft this month. They need to turn that concern into a specific question they can bring to the data team. But translating a feeling into a question requires them to already have some model of how the data is structured. Most business people don't. So they ask something adjacent to what they actually mean.

Translation 2: Question → Query

The analyst receives the question and translates it into SQL. This is where most of the hidden decisions live. Every WHERE clause is an assumption. Every JOIN hides a definition. Every metric has a denominator that nobody agreed on.

The analyst is making these calls based on their understanding of the data model, their interpretation of the business question, and often — honestly — what's easiest to write. These decisions are rarely surfaced or reviewed.
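A small sketch of one such hidden decision, assuming a hypothetical `cancellations` table and an invented count of ten accounts active at the start of the month. The single boolean below is the kind of choice that usually lives only in the analyst's head.

```python
import sqlite3

# Hypothetical data: three cancellations in the month, one of them involuntary.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE cancellations (account_id INTEGER, reason TEXT);
    INSERT INTO cancellations VALUES
        (1, 'voluntary'), (2, 'voluntary'), (3, 'payment_failure');
""")

# Assumed denominator: accounts active at the start of the month.
ACTIVE_AT_MONTH_START = 10


def churn_rate(include_payment_failures: bool) -> float:
    """Return monthly churn under one of two numerator definitions."""
    sql = "SELECT COUNT(*) FROM cancellations"
    params = ()
    if not include_payment_failures:
        # This WHERE clause is the assumption nobody wrote down.
        sql += " WHERE reason = ?"
        params = ("voluntary",)
    (cancelled,) = conn.execute(sql, params).fetchone()
    return cancelled / ACTIVE_AT_MONTH_START


print("voluntary only:        ", churn_rate(include_payment_failures=False))
print("incl. payment failures:", churn_rate(include_payment_failures=True))
```

Same table, same month, and churn is either 20% or 30% depending on one filter. Changing the denominator (for example, to accounts active at month end) would move the number again.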

Translation 3: Query → Insight

The output comes back as a table or a chart. Now the original stakeholder has to interpret it. They apply their own mental model of what the numbers should mean — which may have nothing to do with how the analyst defined the underlying query.

"Wait, why is this number so much lower than what I was expecting?"

And the cycle begins again.

Three translations. Three points of failure. And in most organizations, none of these handoffs are documented. The query runs, the chart gets dropped in Slack, someone nods, and everyone moves on — carrying subtly different understandings of what just happened.

Why SQL Gets the Blame

SQL is old. SQL is verbose. SQL has its quirks — the NULL behaviors, the implicit type coercions, the joins that silently multiply rows. So when an analytics workflow breaks down, it's tempting to blame the query language.

"If only non-technical teams could just write SQL themselves."

But giving a PM direct SQL access doesn't solve the translation problem. It just moves the failure point. Now the PM writes a query that runs — no syntax errors, no crashes — but answers a subtly wrong question because they didn't know about the duplicate event rows, or the soft-deleted records that need to be filtered out, or the timezone normalization your team quietly applies to all timestamps.
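Here is roughly what that looks like, as a sketch with made-up `users` and `events` tables. The duplicate delivery and the `deleted_at` soft-delete column are assumptions chosen to mirror the pitfalls just described, not a description of any particular schema.

```python
import sqlite3

# Hypothetical schema and rows, invented for illustration.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (user_id INTEGER PRIMARY KEY, deleted_at TEXT);
    CREATE TABLE events (event_id TEXT, user_id INTEGER, name TEXT);
    INSERT INTO users VALUES (1, NULL), (2, NULL), (3, '2024-01-15');
    INSERT INTO events VALUES
        ('e1', 1, 'export'),
        ('e1', 1, 'export'),  -- same event delivered twice
        ('e2', 2, 'export'),
        ('e3', 3, 'export');  -- belongs to a soft-deleted user
""")

# The query a newcomer would write: valid SQL, no errors.
naive_count = conn.execute(
    "SELECT COUNT(*) FROM events WHERE name = 'export'"
).fetchone()[0]

# The query that encodes the team's unwritten rules.
careful_count = conn.execute("""
    SELECT COUNT(DISTINCT e.event_id)
    FROM events AS e
    JOIN users AS u ON u.user_id = e.user_id
    WHERE e.name = 'export'
      AND u.deleted_at IS NULL
""").fetchone()[0]

print("naive:  ", naive_count)
print("careful:", careful_count)
```

The naive query reports 4 exports and the careful one reports 2. Neither raises an error, which is exactly the problem.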

SQL literacy is valuable. But it's not the same as data fluency, and data fluency isn't the same as shared definitional clarity.

The real bottleneck isn't the syntax. It's the semantics.

Natural Language Interfaces: The Promise and the Catch

This is where modern AI-powered analytics tools enter the picture — and where the conversation gets interesting.

Natural language interfaces (NLIs) for data promise to collapse the translation layer. Instead of a PM writing a Jira ticket, an analyst writing a query, and an output getting reinterpreted, you just... ask. The system handles the SQL. The insight comes back directly.

And this works — remarkably well — for a certain class of questions. Lookup queries, aggregations, comparisons, trend lines. If you want to know how many users signed up last week broken down by country, a good NLI will nail it.

But here's the catch: the hardest part of the translation problem isn't the SQL generation. It's the semantic layer underneath it.

An NLI that doesn't know your company's definition of "active user" will still confidently return an answer. It just won't be your answer: it will reflect the most generic reading of "active," not the business-specific one your team spent two weeks arguing over in a metrics review.

The best NLIs understand this. They don't just translate questions into SQL — they translate questions into SQL anchored to a governed semantic layer: a set of pre-defined, agreed-upon metrics, dimensions, and business logic. The translation still happens, but it happens once, up front, collaboratively — not ad hoc, in every query, by every analyst working from memory.

This is the architecture that actually reduces translation drift:

Business Intent → Natural Language Interface → Semantic Layer (governed metrics, shared definitions) → SQL Generation → Data Warehouse

Remove the semantic layer, and you're not solving the problem — you're just making it faster to produce wrong answers.
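As a rough sketch of what "anchored to a semantic layer" can mean in practice, the snippet below keeps metric definitions in one registry and generates SQL only from that registry. Everything here is hypothetical: the `Metric` class, the `core_actions` table, its column names, and the SQLite-flavored date filter are illustrative assumptions, not any real tool's API.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Metric:
    """One governed metric definition (hypothetical structure)."""
    name: str
    version: int
    description: str
    sql_expression: str
    base_table: str
    filters: Tuple[str, ...] = ()


# The registry is the single place where "active user" gets decided.
METRICS: Dict[str, Metric] = {
    "active_users": Metric(
        name="active_users",
        version=1,
        description=(
            "Users who have performed at least one core action within "
            "the last 28 days, excluding internal team accounts."
        ),
        sql_expression="COUNT(DISTINCT user_id)",
        base_table="core_actions",  # assumed table name
        filters=(
            "occurred_at >= DATE('now', '-28 days')",  # SQLite-style date math
            "is_internal = 0",
        ),
    ),
}


def compile_metric(metric_name: str) -> str:
    """Build SQL from a governed definition; refuse to guess otherwise."""
    if metric_name not in METRICS:
        raise KeyError(f"No governed definition for metric {metric_name!r}")
    metric = METRICS[metric_name]
    where = " AND ".join(metric.filters) if metric.filters else "1 = 1"
    return (
        f"SELECT {metric.sql_expression} AS {metric.name} "
        f"FROM {metric.base_table} WHERE {where}"
    )


print(compile_metric("active_users"))
```

The design choice worth noticing is the `KeyError`: asking for an undefined metric such as "power_users" fails loudly instead of producing a plausible-looking number from an improvised definition.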

What Good Looks Like

The organizations that get analytics right aren't necessarily the ones with the most sophisticated tooling. They're the ones who've done the unglamorous work of reducing ambiguity at its source.

That means:

1. Defining metrics before building dashboards. Not "active users" as whatever-the-query-happens-to-count, but a formal, written, agreed-upon definition: "Users who have performed at least one core action within the last 28 days, excluding internal team accounts." Store it. Version it. Cite it.

2. Making assumptions visible. Every analytical output should surface the key assumptions baked into it. Not buried in a footnote — in the headline. "This chart defines churn as voluntary cancellations only. Payment failures are excluded."

3. Closing the loop between question and answer. Before shipping an analysis, the analyst should be able to say: "Here is what you asked. Here is what I interpreted that to mean. Here is what I built. Does this match your intent?" This sounds obvious. Almost no one does it systematically.

4. Treating the semantic layer as a product. Your metric definitions are infrastructure. They deserve the same care as your data pipelines — ownership, documentation, testing, and deprecation policies.

None of this is technically hard. All of it is organizationally hard. Which is why most teams skip it and wonder why their dashboards never seem to tell the same story twice.
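Points 2 and 3 are mostly habit, but a little structure can help. One possible shape, sketched with a hypothetical `AnalysisBrief` class (the name and fields are invented here, not an established convention):

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AnalysisBrief:
    """Hypothetical record of one analysis request, for illustration only."""
    asked: str
    interpreted_as: str
    built: str
    assumptions: List[str] = field(default_factory=list)

    def headline(self, title: str) -> str:
        # Put the assumptions in the chart title itself, not in a footnote.
        if not self.assumptions:
            return title
        return f"{title} ({'; '.join(self.assumptions)})"

    def confirmation_message(self) -> str:
        # The check-in the analyst sends before shipping.
        return "\n".join([
            f"Here is what you asked: {self.asked}",
            f"Here is what I interpreted that to mean: {self.interpreted_as}",
            f"Here is what I built: {self.built}",
            "Does this match your intent?",
        ])


brief = AnalysisBrief(
    asked="Is churn getting worse?",
    interpreted_as="Monthly cancellations compared with the prior month",
    built="Count of voluntary cancellations per calendar month",
    assumptions=["voluntary cancellations only", "payment failures excluded"],
)

print(brief.headline("Monthly churn"))
print(brief.confirmation_message())
```

The code is trivial on purpose. The value is in forcing the three fields to be filled in before anything gets posted to Slack.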

The Real Skill in Analytics

SQL is learnable in weeks. Python is learnable in months. The translation skill — the ability to hold a fuzzy business question, extract the analytical intent behind it, make the definitional choices explicit, build the right query, and communicate the output with appropriate caveats — takes years to develop, and it's almost impossible to hire for directly.

It's also the skill that's hardest to automate away, because it's fundamentally a communication skill wearing a technical costume.

The future of analytics tooling isn't about making SQL obsolete. It's about compressing the translation surface — making it easier to get shared definitions in place, surfacing assumptions automatically, and letting both the business person and the analyst stay closer to the question they actually care about.

The best data teams aren't the ones who write the cleverest queries. They're the ones who've built the clearest shared language between the people who have questions and the systems that can answer them.

SQL was never the problem. It was always the conversation around it.